In a cloud-based analytics dashboard, a 200-millisecond delay is imperceptible. In a retail recommendation engine, half a second of latency is tolerable. On an industrial conveyor belt moving at 2.5 meters per second, 200 milliseconds means the target object has traveled half a meter past the decision point — and the sorting window has closed. In industrial AI, latency is not a performance metric. It is a physical constraint.

This fundamental reality shapes every architectural decision in systems designed to operate at belt speed. From where inference runs to how models are structured to how actuators receive commands, the entire pipeline must be engineered around one unforgiving requirement: the system must perceive, decide, and act before the object moves out of reach.

The Physics of the Problem

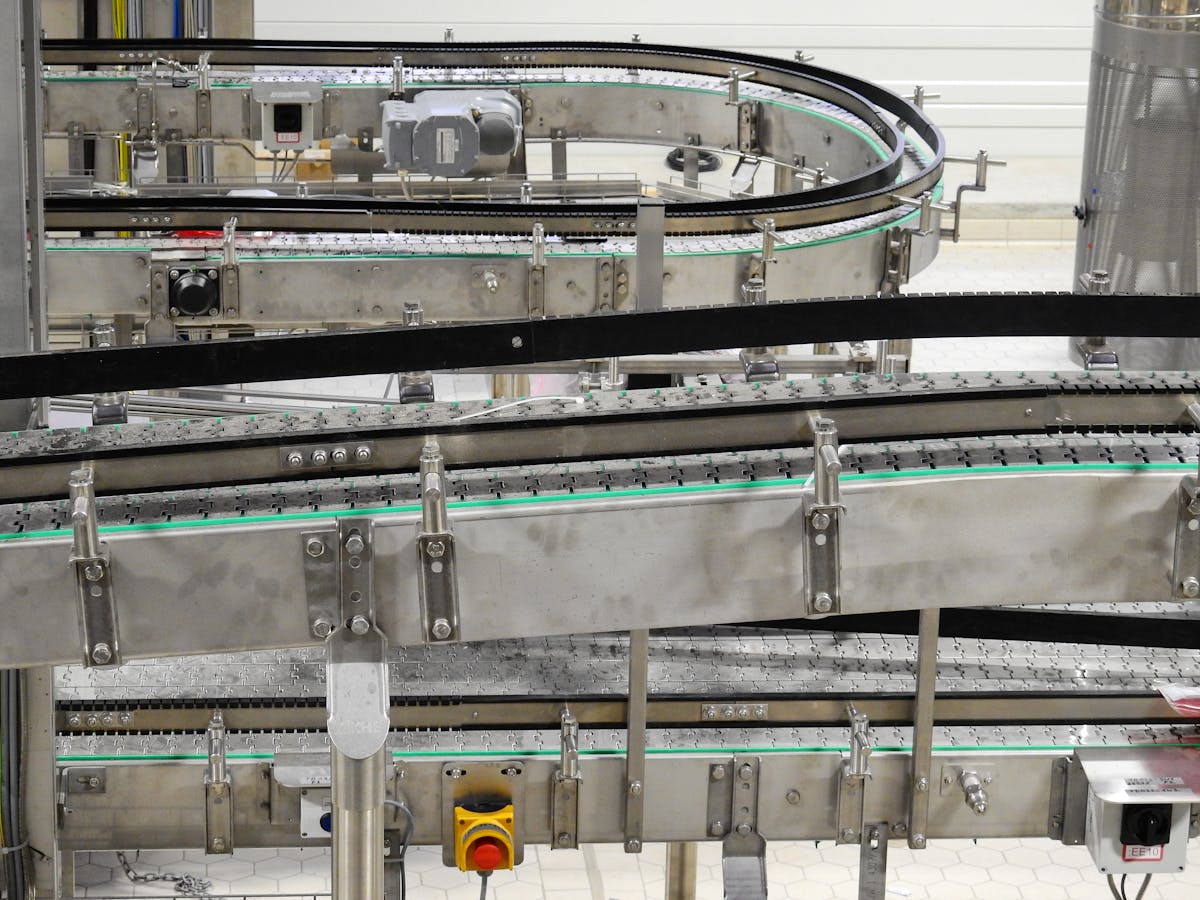

Industrial conveyor belts in sorting and quality inspection applications typically operate at speeds between 1.5 and 3.0 meters per second. In high-throughput recycling facilities, belt speeds can reach 3.5 m/s or higher. At 2.5 m/s, an object moves 25 centimeters in 100 milliseconds.

The intervention window — the time between when an object can be identified and when it passes the actuator (typically an air jet, robotic arm, or diverter gate) — depends on the physical layout of the sorting station. In most configurations, this window ranges from 80 to 150 milliseconds. That is the total time budget for the entire pipeline: image capture, preprocessing, model inference, decision logic, and actuator command.

Tighter layouts shrink the budget further. At a belt speed of 3.0 m/s with a 120 mm sorting window, the total available response time is only 40 milliseconds (0.12 m divided by 3.0 m/s). Every millisecond of added latency reduces effective sorting accuracy, not because the AI made a wrong classification but because the correct decision arrived too late.
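The arithmetic behind these figures is simple enough to check in a few lines. The sketch below is illustrative only; the function names are made up for this example, and it assumes a constant belt speed.

```python
def travel_distance_m(belt_speed_m_s: float, elapsed_ms: float) -> float:
    """Distance in meters an object travels on the belt during elapsed_ms."""
    return belt_speed_m_s * elapsed_ms / 1000.0


def response_budget_ms(window_length_mm: float, belt_speed_m_s: float) -> float:
    """Time in milliseconds an object spends inside a sorting window."""
    if belt_speed_m_s <= 0:
        raise ValueError("belt speed must be positive")
    # (mm / 1000) meters divided by m/s gives seconds; times 1000 gives ms,
    # so the two factors of 1000 cancel.
    return window_length_mm / belt_speed_m_s


print(travel_distance_m(2.5, 100))   # meters traveled in 100 ms at 2.5 m/s
print(response_budget_ms(120, 3.0))  # ms available for a 120 mm window at 3.0 m/s
```

Doubling the belt speed halves the budget, which is why a layout that works at 1.5 m/s may not work at 3.0 m/s without moving the camera or the actuator.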

This is what separates industrial vision from most other AI applications. The penalty for latency is not a degraded user experience or a slower report. It is a physical miss — an object that should have been sorted correctly passing through unhandled, reducing yield and contaminating output streams.

Edge vs. Cloud: Why the Round Trip Kills

Cloud inference is the default architecture for most enterprise AI workloads, and for good reason. It offers elastic scaling, centralized model management, and access to powerful GPU clusters. But for real-time industrial sorting, the network round trip is disqualifying.

A typical cloud inference round trip — image upload, queue wait, inference, result download — takes 150 to 500 milliseconds under favorable conditions. Add network variability, and worst-case latencies can spike to over a second. Even a dedicated on-premises server rack connected via local network introduces 10 to 30 milliseconds of communication overhead before inference even begins.

The math is unforgiving. If the intervention window is 100 milliseconds total and the network round trip consumes 40 milliseconds, only 60 milliseconds remain for inference, decision logic, and actuator communication. That is a tight budget even for optimized models. And unlike web applications, there is no option to retry or display a loading spinner. The object on the belt does not wait.
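To make the comparison concrete, the sketch below subtracts the network overhead ranges quoted above from a 100-millisecond window. The dictionary keys and function name are hypothetical, and treating the edge case as zero network overhead is a simplifying assumption.

```python
WINDOW_MS = 100.0  # intervention window used in the example above

# (best-case, worst-case) network overhead in ms, from the ranges in the text.
NETWORK_OVERHEAD_MS = {
    "cloud": (150.0, 500.0),
    "on_prem_rack": (10.0, 30.0),
    "edge": (0.0, 0.0),  # assumption: no network hop on the critical path
}


def remaining_budget_ms(window_ms: float, overhead_ms: float) -> float:
    """Time left for inference, decision logic, and actuation.

    A negative result means the object has already passed the actuator.
    """
    return window_ms - overhead_ms


for name, (best, worst) in NETWORK_OVERHEAD_MS.items():
    print(
        f"{name}: best case {remaining_budget_ms(WINDOW_MS, best):.0f} ms, "
        f"worst case {remaining_budget_ms(WINDOW_MS, worst):.0f} ms"
    )
```

Under these numbers the cloud option is negative even in its best case, while the on-premises rack survives only if everything downstream fits in what remains.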

This is why Elysium, Neuvana’s industrial sorting platform, processes inference entirely at the edge. The vision system, classification model, and decision engine all run on hardware physically mounted at the sorting station. The path from camera to actuator command passes through local compute only, eliminating network latency from the critical path entirely.

Model Architecture Under Constraint

Running inference at the edge introduces its own challenge: the available compute is limited compared to cloud GPU clusters. Edge devices — whether industrial PCs with embedded GPUs or dedicated AI accelerators — offer a fraction of the throughput available in a data center.

This forces careful model design. The goal is not to build the most accurate classifier possible in isolation, but to build the most accurate classifier that can run within the latency budget on the available hardware. In practice, this means:

- Model quantization. Converting 32-bit floating-point weights to 8-bit integers reduces model size by roughly 4x and substantially accelerates inference on edge hardware with integer support, often with less than 1% accuracy loss for well-calibrated models.

- Architecture selection. Lightweight architectures designed for edge deployment — such as EfficientNet variants and MobileNet derivatives — achieve competitive accuracy at a fraction of the computational cost of larger models like ResNet-152 or Vision Transformers.

- Pipeline parallelism. While one frame is being classified, the next frame is being captured and preprocessed. Overlapping these stages keeps the GPU from idling while it waits for I/O. This raises throughput; it does not shorten the latency of any individual frame, which must still fit within the latency envelope.

- Task-specific optimization. A model that needs to distinguish 15 material categories on a specific waste stream can be smaller and faster than a general-purpose classifier trained on thousands of categories. Domain focus enables efficiency.
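As a rough illustration of the quantization point in the list above, the following sketch applies symmetric per-tensor 8-bit quantization to a handful of made-up weights in plain Python. It is a toy under stated assumptions, not how any particular toolchain or the Elysium platform does it; production quantizers add calibration data, per-channel scales, and activation quantization.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the 8-bit values."""
    return [v * scale for v in quantized]


weights = [0.82, -1.27, 0.003, 0.45, -0.61]  # made-up values for illustration
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", quantized)
print("scale:", scale)
print("max round-trip error:", max_error)
print("storage ratio (32-bit vs 8-bit):", 32 // 8)
```

The round-trip error of each weight is bounded by half the scale, so the weight with the largest magnitude sets the precision available to all the others. That is one reason calibration matters in practice.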

Elysium’s architecture reflects these principles. Models are trained in the cloud with full-precision compute, then optimized and deployed to edge hardware as quantized, purpose-built classifiers tuned for specific material streams and belt configurations.

The Full Pipeline: Camera to Actuator

Understanding the latency budget requires tracing the complete pipeline, because inference is only one component:

- Image acquisition: 5–10 ms. Industrial cameras with global shutters expose the whole sensor at once, so moving objects are captured without rolling-shutter distortion; short exposure times keep motion blur to a minimum. Triggering is typically synchronized with belt encoders or optical sensors.

- Preprocessing: 2–5 ms. Cropping, normalization, and format conversion prepare the raw image for the model. On modern edge GPUs, this runs as a parallel preprocessing kernel.

- Model inference: 15–40 ms. The classification model processes the image and outputs category probabilities. This is the most computationally intensive step and the primary target for optimization.

- Decision logic: 1–2 ms. Confidence thresholds, sorting rules, and output stream assignments determine the action. Low-confidence classifications can be routed to a rejection stream or flagged for human review.

- Actuator command: 5–15 ms. The physical sorting mechanism — air jet, paddle, or robotic picker — receives the command and executes. Pneumatic actuators have their own mechanical response time that must be accounted for.
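The decision-logic stage in the list above can be sketched in a few lines. The threshold value, class names, and stream names here are hypothetical placeholders; a real deployment would take them from the facility's sorting rules.

```python
from typing import Dict

CONFIDENCE_THRESHOLD = 0.85  # hypothetical value
REJECT_STREAM = "reject"     # hypothetical stream name

# Hypothetical mapping from material class to output stream.
STREAM_FOR_CLASS = {"pet": "plastics", "hdpe": "plastics", "aluminum": "metals"}


def decide(probabilities: Dict[str, float]) -> str:
    """Choose an output stream from the model's class probabilities."""
    label, confidence = max(probabilities.items(), key=lambda kv: kv[1])
    if confidence < CONFIDENCE_THRESHOLD:
        # Low-confidence classifications go to the rejection stream.
        return REJECT_STREAM
    # Classes without a configured stream are also rejected.
    return STREAM_FOR_CLASS.get(label, REJECT_STREAM)


print(decide({"pet": 0.93, "hdpe": 0.05, "aluminum": 0.02}))
print(decide({"pet": 0.55, "hdpe": 0.40, "aluminum": 0.05}))
```

Because this stage is a dictionary lookup and a comparison, it fits comfortably inside its 1–2 ms allocation; the design choice that matters is where to set the threshold, since a higher value trades recovery for purity.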

In total, a well-optimized pipeline completes the full cycle in roughly 30 to 70 milliseconds (the stage ranges above sum to 28 to 72), leaving margin within a typical 100-millisecond intervention window. That margin is not a luxury: it absorbs variance in belt speed, object positioning, and the occasional inference that takes longer than average.
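Adding up the per-stage ranges listed above is a quick way to check the total against the window. The stage figures below are copied from the list; the variable names are illustrative, and the 100 ms window is the typical value used in the text.

```python
# (low, high) latency in ms for each stage, taken from the list above.
STAGE_BUDGET_MS = {
    "image_acquisition": (5, 10),
    "preprocessing": (2, 5),
    "model_inference": (15, 40),
    "decision_logic": (1, 2),
    "actuator_command": (5, 15),
}
WINDOW_MS = 100  # typical intervention window from the text

best_case_ms = sum(low for low, _ in STAGE_BUDGET_MS.values())
worst_case_ms = sum(high for _, high in STAGE_BUDGET_MS.values())
worst_case_margin_ms = WINDOW_MS - worst_case_ms
inference_share = STAGE_BUDGET_MS["model_inference"][1] / worst_case_ms

print(f"pipeline total: {best_case_ms} to {worst_case_ms} ms")
print(f"margin in the worst case: {worst_case_margin_ms} ms")
print(f"inference share of worst case: {inference_share:.0%}")
```

Inference accounts for more than half of the worst-case total, which is consistent with treating it as the primary optimization target.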

Why Milliseconds Compound

The impact of latency is not linear. A sorting system operating at 95% accuracy within its latency budget does not degrade gracefully to 90% when latency increases by 20%. Instead, the objects that fall outside the intervention window are missed entirely — they are not sorted incorrectly, they are not sorted at all. This creates a bimodal outcome: objects processed within the window are handled with full model accuracy, and objects outside the window are lost.

At production scale, the numbers add up quickly. A facility processing 10 tons of material per hour on a belt carrying 40 objects per second will see 144,000 objects in an hour. If latency causes 3% of objects to exceed the intervention window, that is 4,320 missed sorting opportunities per hour. Each one is material that enters the wrong output stream, reducing purity and recovery rates.
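The same calculation can be parameterized to see how sensitive the miss count is to the late fraction. This is a back-of-the-envelope sketch; the function name is made up for illustration, and it assumes a steady object rate over the whole hour.

```python
def missed_per_hour(objects_per_second: float, late_percent: float) -> float:
    """Objects per hour that arrive at the actuator after the window has closed."""
    objects_per_hour = objects_per_second * 3600
    return objects_per_hour * late_percent / 100


for late_percent in (1, 3, 5):
    missed = missed_per_hour(40, late_percent)
    print(f"{late_percent}% late -> {missed:.0f} missed objects per hour")
```

At 40 objects per second, every additional percentage point of late decisions costs another 1,440 objects per hour.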

This is why industrial AI system design starts with the latency budget and works backward. The belt speed and physical layout define the window. The window defines the maximum pipeline latency. The latency budget defines the edge hardware, model architecture, and software optimization required. Every component serves a single governing constraint: the system must be fast enough, because in industrial sorting, fast enough is the difference between working and not working.